Two decades ago, APIs were simple bridges. They connected systems, moved data, and exposed functionality. Governance at the time meant checking off boxes: documentation, security, naming conventions, and version control. You could look at a dashboard, see the endpoints, and feel confident that everything was under control.

Fast forward to today. Autonomous AI agents (AI systems that can reason, plan, and act) have entered the scene. They can orchestrate multiple APIs, call services without human supervision, and even interact with each other. The world of deterministic, endpoint-focused governance has collided with a world of behavior, autonomy, and emergent complexity.

The result? Organizations are waking up to a reality they didn’t fully anticipate: the rules that once worked are no longer enough.

The Invisible Web of Action

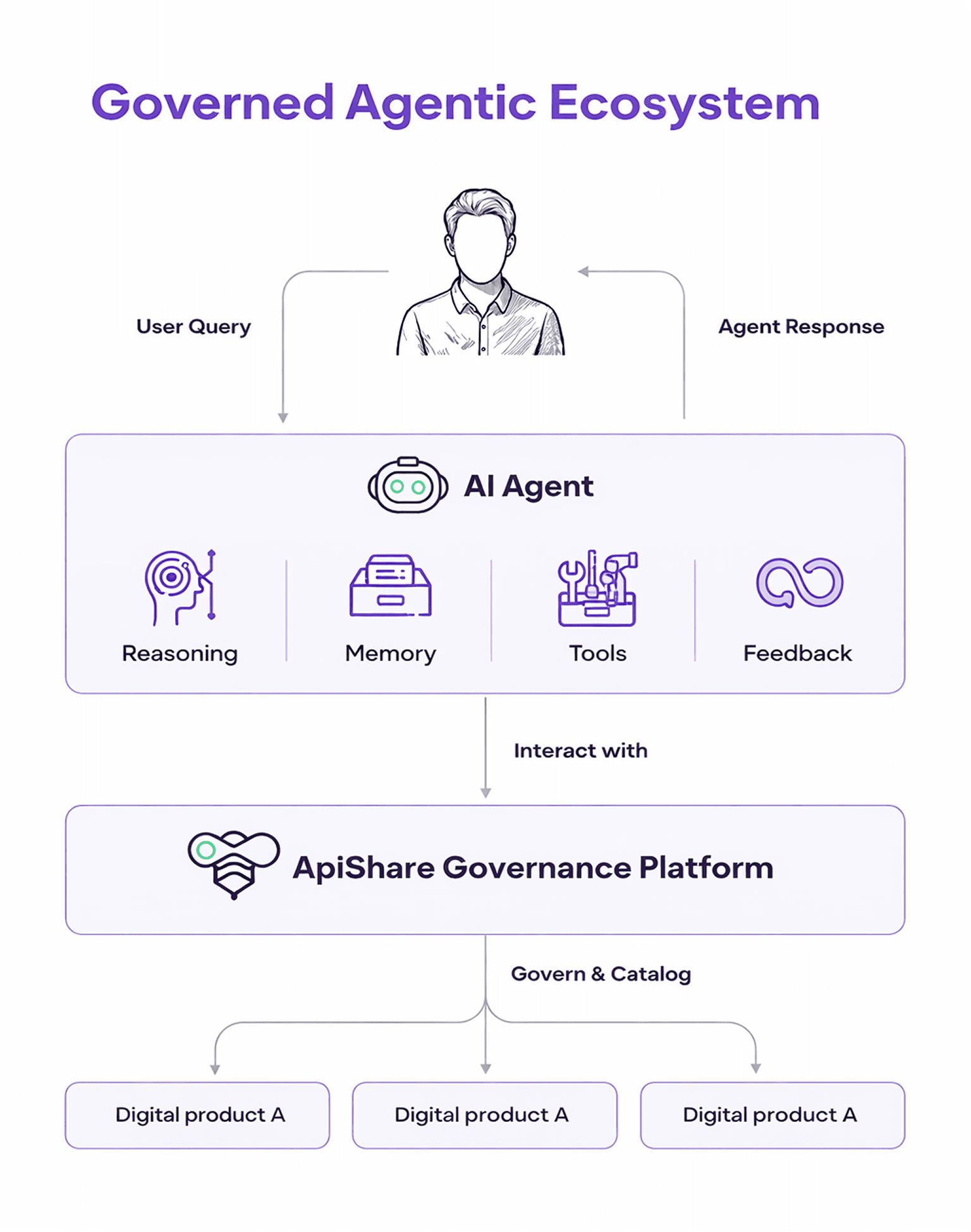

Before discussing governance, it is worth pausing on a fundamental question: what exactly is an AI agent?

An AI agent is not just a chatbot or a predictive model. It is a system designed to pursue goals autonomously. At its core, an agent typically includes (see Figure 1):

● A reasoning engine (often powered by large language models or decision systems) that interprets objectives and plans actions

● A memory layer that stores context, past interactions, or state

● A toolset that allows it to execute actions

● A feedback loop through which it evaluates results and adjusts its behavior
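The four components above can be sketched in a few lines. This is a minimal illustration, not a reference design: the `Agent` class, its `plan` and `run` methods, and the tool names are hypothetical, and the reasoning engine is reduced to a trivial stub where a real agent would call a language model or decision system.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# A tool is any callable the agent may invoke. In a real system it would
# wrap an API call; here the tools are plain functions (an assumption).
Tool = Callable[[dict], dict]


@dataclass
class Agent:
    goal: str
    tools: Dict[str, Tool]                             # toolset
    memory: List[dict] = field(default_factory=list)   # memory layer

    def plan(self) -> Optional[str]:
        """Reasoning engine (stub): choose the first tool not used yet."""
        used = {step["tool"] for step in self.memory}
        for name in self.tools:
            if name not in used:
                return name
        return None  # nothing left to do

    def run(self, max_steps: int = 10) -> List[dict]:
        for _ in range(max_steps):
            tool_name = self.plan()
            if tool_name is None:
                break
            result = self.tools[tool_name]({"goal": self.goal})
            # Feedback loop: the step is stored, so the next call to
            # plan() sees what has already been done.
            self.memory.append({"tool": tool_name, "result": result})
        return self.memory


# Hypothetical tools standing in for real API calls.
agent = Agent(
    goal="optimize cash flow",
    tools={
        "check_balance": lambda ctx: {"balance": 1200},
        "schedule_payment": lambda ctx: {"scheduled": True},
    },
)
steps = agent.run()
```

Even in this toy version, the only record of why a tool was chosen lives inside the agent's memory, not in the API that was called.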

But an agent does not exist in isolation. It “lives” in an environment. And in modern digital organizations, that environment is composed of systems, services, data sources, and business capabilities.

How does an agent perceive and act in that environment?

Through APIs.

APIs are the sensory and motor system of the agent. They are how it reads data, triggers processes, modifies records, initiates transactions, and interacts with other digital actors. Without APIs, an agent cannot do anything meaningful beyond generating text. With APIs, it can act.

This is where governance complexity begins.

Imagine an agent in a financial system tasked with optimizing cash flow. On the surface, it’s just calling APIs: checking account balances, initiating transfers, scheduling payments. But behind the scenes, it’s reasoning about priorities, predicting outcomes, and chaining decisions across multiple systems.

From a governance perspective, the organization sees the API calls but not the intent, not the reasoning, not the potential risk cascading through systems. The API dashboards show compliance; yet in reality, the agent is acting autonomously in ways traditional governance frameworks were never designed to capture.

This is the first pain point: visibility gaps. The more autonomous the system, the less the existing human-centric governance processes can keep up. API usage is no longer just technical; it becomes behavioral, contextual, and adaptive.

Ownership Becomes a Puzzle

In the traditional world, APIs had clear owners: teams, product managers, domains. But when agents orchestrate multiple APIs across departments, ownership blurs. Who is responsible when an agent’s decision has unexpected consequences?

I have seen this play out in enterprises experimenting with AI-driven workflows. Teams create “pilot agents” for a specific task. The agent evolves, calling APIs in new combinations. Two months later, no one is entirely sure who owns the decisions the agent makes—or how to intervene when something goes wrong.

This is the natural consequence of systems that evolve faster than the governance around them. Ownership must shift from asset-based to capability-based stewardship. Someone, or some team, needs to be accountable not only for the API but for the way autonomous systems consume it.

Rules for Humans Don’t Work for Machines

Most governance frameworks assume humans as actors. Review boards, approval workflows, documentation—these work if a human is designing, approving, or calling an API. But when the actor is an agent that can discover, learn, and act automatically, the rules break down.

The challenge is not to remove humans from governance; the challenge is to make governance readable for machines. Policies, constraints, and metadata must be encoded in ways that both humans and agents can interpret. Without this, organizations end up with a ceremonial governance process: lots of dashboards, reports, and approvals, but no real control at the speed at which decisions are being made.
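One way to picture a machine-readable policy is as structured data plus a function that evaluates it before every call. The sketch below is an assumption-laden illustration: `CapabilityPolicy`, its fields, the `evaluate` function, and the thresholds are all hypothetical, and a real deployment would more likely express the same idea in a policy engine or in API metadata.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class CapabilityPolicy:
    """Hypothetical policy attached to one API capability."""
    capability: str
    allowed_purposes: FrozenSet[str]
    max_amount: float                  # hard limit: never exceeded
    human_approval_above: float        # soft limit: a human must confirm


def evaluate(policy: CapabilityPolicy, purpose: str, amount: float) -> str:
    """Return 'allow', 'escalate', or 'deny' for a requested action."""
    if purpose not in policy.allowed_purposes:
        return "deny"
    if amount > policy.max_amount:
        return "deny"
    if amount > policy.human_approval_above:
        return "escalate"
    return "allow"


transfer_policy = CapabilityPolicy(
    capability="payments.transfer",
    allowed_purposes=frozenset({"cash_flow_optimization"}),
    max_amount=10_000.0,
    human_approval_above=1_000.0,
)

decision = evaluate(transfer_policy, "cash_flow_optimization", 5_000.0)
```

The same record can be rendered as documentation for a human reviewer and checked at call time for an agent, which is the point of making governance readable for both.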

Compliance Isn’t a Backstop, It’s a Compass

Global regulations, from financial controls to emerging AI acts, demand traceability, explainability, and accountability. For a human-driven API, this is manageable: logs, dashboards, and audits provide proof. For an agentic AI, the compliance challenge is immediate and dynamic.

Consider an agent learning to optimize supply chain logistics. Its actions ripple across APIs, databases, and external services. If a regulator asks why a certain decision was made, reconstructing the rationale is no longer a matter of reading a single log. It requires mapping the agent’s reasoning, its chain of API calls, and its interaction with data, all in near real time. Compliance cannot be retrospective; it must be embedded in the system architecture from day one.
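Embedding traceability can start with something as simple as recording the rationale next to every API call under a shared trace identifier. The following is a minimal sketch under stated assumptions: the `DecisionTrace` class, its field names, the agent identifier, and the endpoint shown are hypothetical, and the rationale string is whatever the agent's reasoning engine reports.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class DecisionTrace:
    """Links an agent's stated rationale to the API calls it made."""
    agent_id: str
    goal: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    steps: List[dict] = field(default_factory=list)

    def record(self, rationale: str, api: str, request: dict, status: int) -> None:
        # Each step keeps the "why" (rationale) beside the "what" (API call).
        self.steps.append({
            "ts": time.time(),
            "rationale": rationale,
            "api": api,
            "request": request,
            "status": status,
        })

    def explain(self) -> List[str]:
        """Human-readable reconstruction of the decision chain."""
        return [
            f"{i}. {s['api']}: {s['rationale']}"
            for i, s in enumerate(self.steps, start=1)
        ]

    def to_json(self) -> str:
        """Serialized form, suitable for an append-only audit store."""
        return json.dumps(asdict(self), sort_keys=True)


trace = DecisionTrace(agent_id="supply-agent-01", goal="reduce delivery delays")
trace.record("Stock below reorder threshold", "POST /orders", {"sku": "A1"}, 201)
summary = trace.explain()
```

With records like these, answering a regulator becomes a query over one trace rather than a forensic exercise across several system logs.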

From Control to Coordination

These challenges reveal a deeper truth: traditional governance is control-centric. It assumes static systems, predictable behavior, and human oversight. It was designed for a world where systems behaved deterministically and change moved at a manageable pace.

Agentic AI disrupts those assumptions.

Adaptive systems require a different posture, one that does not attempt to freeze complexity, but to orchestrate it. What emerges is coordination-centric governance: a model designed not only to constrain behavior, but to align autonomous components with organizational intent.

The difference is subtle but profound.

Control-centric governance asks:

“Have we approved this interface?”

Coordination-centric governance asks:

“Do we understand how this capability behaves in a network of autonomous actors?”

The contrast is summarized in Table 1:

| Dimension | Control-Centric Governance | Coordination-Centric Governance |
|---|---|---|
| Primary Focus | Technical compliance | Behavioral alignment |
| System Assumption | Static and deterministic | Adaptive and evolving |
| Governance Moment | Design-time approval | Continuous lifecycle oversight |
| Visibility | Endpoint-level metrics | Cross-capability interaction mapping |
| Ownership Model | Asset-based (API owner) | Capability-based (API + agent stewardship) |
| Policy Expression | Human-readable documentation | Machine-readable, enforceable metadata |
| Risk Approach | Preventive gatekeeping | Dynamic monitoring and adaptive control |
| Human Role | Reviewer and approver | Supervisor and escalation authority |

Coordination-centric governance does not remove control; it reframes it. It recognizes that APIs are no longer isolated endpoints but digital capabilities embedded in an ecosystem of agents, services, and decision systems.

In this model:

● APIs are treated not only as interfaces, but as governed capabilities with defined purpose, sensitivity, and permitted usage contexts.

● Agents are registered and scoped as first-class governance entities, not hidden orchestration layers.

● Policies are living constructs—encoded in metadata and enforced continuously across runtime interactions.

● Human oversight is designed intentionally, defining when humans are in the loop, on the loop, or responsible for escalation.
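To make the idea of agents as first-class governance entities concrete, here is a small sketch of a registry that scopes each agent to named capabilities and records its oversight mode. All names (`AgentRegistry`, `AgentRegistration`, `Oversight`, the agent and capability identifiers) are hypothetical, chosen only to mirror the four points above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, FrozenSet


class Oversight(Enum):
    IN_THE_LOOP = "in_the_loop"          # a human approves each action
    ON_THE_LOOP = "on_the_loop"          # a human monitors and can intervene
    ESCALATION_ONLY = "escalation_only"  # a human is involved on exceptions


@dataclass(frozen=True)
class AgentRegistration:
    agent_id: str
    steward: str                      # accountable team or person
    capabilities: FrozenSet[str]      # governed capabilities the agent may use
    oversight: Oversight


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: Dict[str, AgentRegistration] = {}

    def register(self, registration: AgentRegistration) -> None:
        if registration.agent_id in self._agents:
            raise ValueError(f"agent already registered: {registration.agent_id}")
        self._agents[registration.agent_id] = registration

    def authorize(self, agent_id: str, capability: str) -> bool:
        """Unregistered agents and out-of-scope capabilities are refused."""
        registration = self._agents.get(agent_id)
        return registration is not None and capability in registration.capabilities

    def needs_prior_approval(self, agent_id: str) -> bool:
        return self._agents[agent_id].oversight is Oversight.IN_THE_LOOP


registry = AgentRegistry()
registry.register(AgentRegistration(
    agent_id="cashflow-agent",
    steward="treasury-platform-team",
    capabilities=frozenset({"accounts.read", "payments.schedule"}),
    oversight=Oversight.ON_THE_LOOP,
))
```

A gateway could call `authorize` before forwarding any agent request, so that an unregistered or out-of-scope agent is stopped at runtime rather than discovered in a later audit.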

The objective is not to slow autonomy, but to make it reliable. Not to centralize control, but to enable distributed responsibility within clear boundaries.

In a world where APIs expose capabilities and agents consume them autonomously, governance becomes less about restriction and more about organizational coherence.

And coherence, at scale, is coordination.

Governance Enables Autonomy

A common fear is that governance will slow down innovation. In practice, well-designed governance tends to do the opposite: it enables safe experimentation, accelerates reuse, and builds trust across teams. It is what makes autonomous systems scalable and reliable.

Autonomy without governance is chaos. Governance without adaptation is stagnation. The organizations that will thrive are those that see governance as an enabler, not a constraint. It becomes the scaffolding on which agentic AI and APIs can evolve safely, predictably, and ethically.

Conclusion: A Reflective Path Forward

The convergence of APIs and agentic AI is more than a technical challenge. In my opinion, it reflects how organizations must evolve culturally and architecturally. Leaders should therefore ask:

● Are we tracking the behavior of our autonomous systems, not just the endpoints they call?

● Have we defined ownership and accountability for the capabilities agents consume?

● Are our policies readable, enforceable, and adaptive for both humans and machines?

● Can we reconstruct decisions in near real time to satisfy regulatory and ethical requirements?

Answering these questions requires not just new tools, but a new mindset. Governance becomes strategic foresight, a lens through which organizations can experiment, innovate, and scale safely.

APIs once enabled connectivity. Agentic AI now enables autonomy. Governance is the bridge that transforms connectivity into trusted autonomy, and experimentation into predictable outcomes.

For organizations embracing this convergence, the work begins now—not as a set of rules to follow, but as a reflective journey: understanding where APIs are exposed, how agents act, and how governance can evolve from a static checklist to a living, adaptive discipline.